Todays experiment is to find the best open source LLM model that runs locally on my MacBook Pro. All without having a huge additional cognitive load on what to pick. Qwen3.5-35B-A3B looked like the ideal new candidate to pick for my use case, but I was not sure it would run on my MacBook Pro.

View this post on X

However a feeling of fear came over me, what if my Mac is not capable and I would find out my expensive machine is insufficient. I was never able to figure out what the best model available was that could run on my specific hardware. The manual path required scraping the model list, investigating my hardware specs and matching these constraints manually.

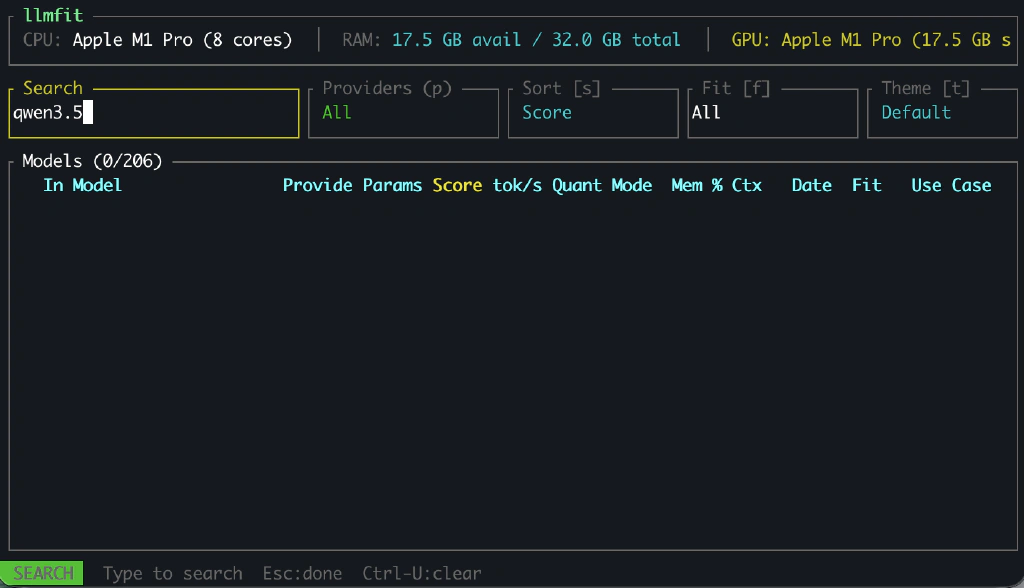

So I started searching for tools to help me and found llmfit on GitHub. A tool that scans my hardware and then proposes the best fitting AI model. Could it really give me a ranked, hardware-aware recommendation so I wouldn’t have to think? What I really wanted was a deterministic answer.

So I installed llmfit on my MacBook Pro via HomeBrew to try it out.

brew tap AlexsJones/llmfit

brew install llmfit

After installation, I searched for Qwen3.5 but couldn’t find it. If the list is incomplete I would still be guessing. It should have the latest and greatest models showing up, otherwise I still would be worried I have not the best model for the job. It would just add to my cognitive load problem this way.

So the next step was to ask ChatGPT how to fix this. First we struggled to make sure I could manually make a new build. Then I had to add the Qwen3.5 models manually. This worked eventually, but after running the newly built llmfit binary it still didn’t show Qwen3.5 models.

I continued my struggle to get it to work, and eventually ChatGPT figured out why this was still happening:

Your hf_models.json does contain Qwen 3.5, but the llmfit UI is filtering them out at runtime. This means the problem is no longer the scraper. It is the frontend filtering logic in llmfit-tui.

Qwen 3.5 models currently have: pipeline_tag =

image-text-to-textllmfit primarily targets:text-generationSo they are silently excluded.

At that point I was ready to give up. It seemed llmfit is not the tool that was solving my problen. It is becoming more common to use multi-modal models for text generation.

Then I just took a leap of faith and installed Qwen3.5-35B-A3B via ollama on my local machine. All without knowing for sure it would work and was the right model for the job. It worked fine…

Key Lesson: I Keep Making the Same Iteration Mistakes

I tried to remove the cognitive load of picking a system manually. It started to complicate things pretty quickly. The fastest way to reduce my cognitive load however was to just test the model directly. Iteration is beating evaluation again. I keep trying to design systems to prevent mistakes. But often the cheapest feedback loop is simply to try. This pattern keeps repeating itself.