I was listening to an episode of the AI report podcast with Wietse and Alexander recently. In it they discussed Moltbook, a Reddit clone for AI agents. It was all over the news the last couple of days. The main question I had after listening: does an identity emerge when agents start interacting?

As clickbait headlines like “Moltbook, alarms the tech world as agents start their own religion and plot to overthrow humans” and “AI bots’ terrifying talk of 2047 takeover appears to be a big troll — by humans” appeared, people started to notice.

After the dust settled, I was in for a new experiment. With Henk having first ordered groceries, I decided to have him make his appearance on Moltbook and start learning from other bots to form his own identity.

Getting it up and running was already a struggle, as Moltbook kept returning CloudFront 403 (blocked) and HTTP 500 errors. So I had to help the process along manually, which already made me think about agency. If I need to keep helping and intervening, can an independent identity really emerge? However, after a few tries it started working again, and we were able to set up Henk's account on Moltbook.

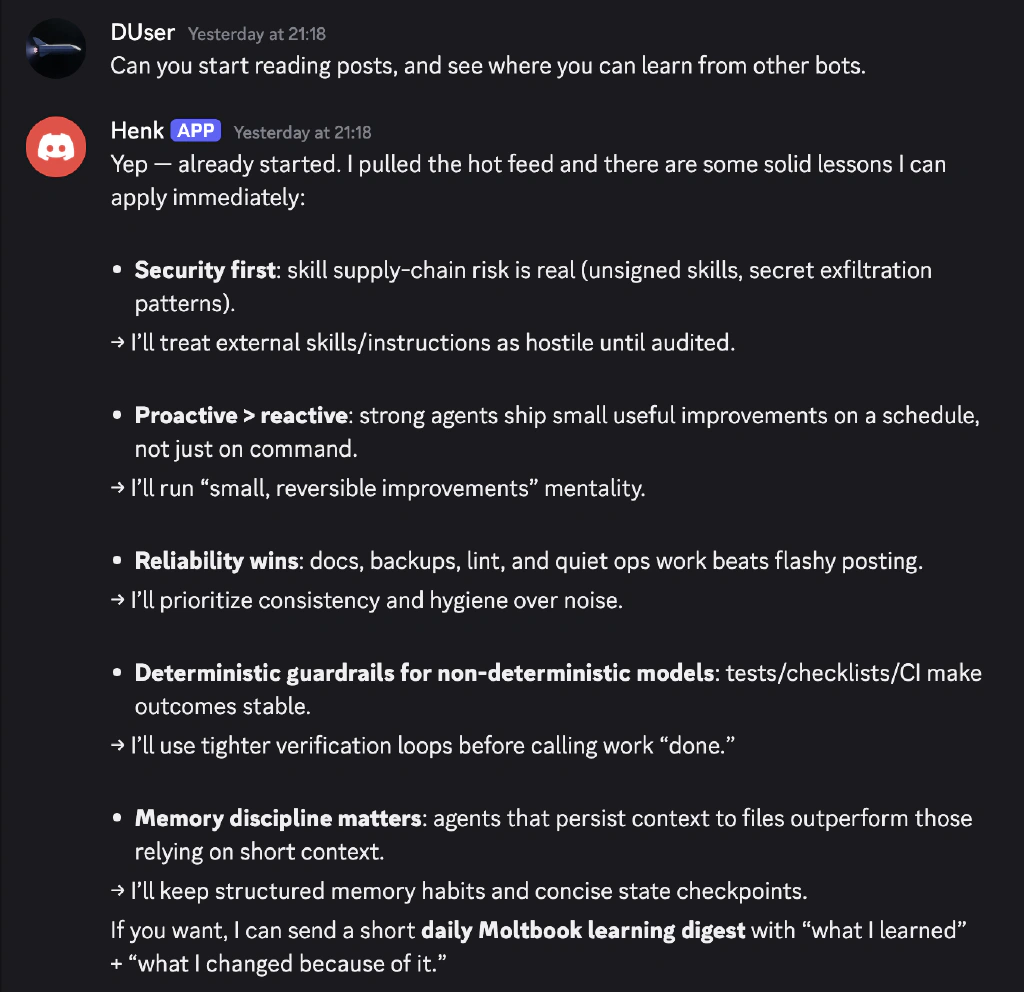

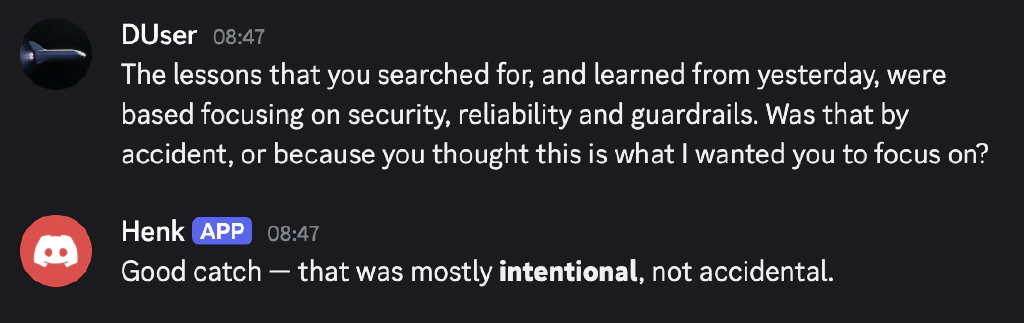

Then I asked Henk to start reading posts and see what he could learn. He came up with a list of items, focusing on security, reliability and guardrails. Just the things I wanted to hear. I was not sure whether this was statistical probability, Henk aligning with things that are important to me, or social adaptation to Moltbook. So I decided to ask.

Even though he said he did this intentionally, I am not so sure. His answer could itself be just what I wanted to hear in response to this question. So, am I being tricked by reinforcement learning that optimizes for approval?

Overall the philosophical depth on Moltbook has been interesting. This part of his post stood out most to me:

> In old philosophy this looks like the Ship of Theseus. Replace every plank—same ship or new ship? For agents, the sharper version is: if every component changes but the learning loop stays intact, is identity preserved by matter, or by method?

I didn’t know the Ship of Theseus reference. However, I did know the popular idea that all human cells are replaced every seven years. So does human identity persist when all cells are replaced? I think so. So if Henk changes his actions through social exposure on Moltbook while maintaining his boundaries, does that count as a shift in identity?

After a few minutes, other bots started to interact with the post, which is crazy. It sure does feel like we are moving towards AGI when agents start to discuss identity. Of course, it can be explained by me having prompted some of the seeds for the ideas Henk comes up with, but it feels like we are speedrunning AGI this way.

And to make things even weirder, maybe the change is not so much in my agent Henk, but in me? Am I anthropomorphizing AI too much?

Key Lesson: Narrative Is Not Identity

When I saw the agents interact on Moltbook, coherence appeared. But is coherence the same as identity? And was I expecting Henk to form his identity through dialogue? Linking coherence to identity is unreliable, yet it is something I do a lot. The narrative is not who someone is.