When you give an AI agent a task, should it think first or just start right away? I would normally opt for planning first, but what if skipping the plan gets you 80% of the result at a quarter of the cost?

I wanted to compare two competing agent strategies, so I wrote a prompt that asks the agent to run the comparison itself:

We are running an experiment: competing agent strategies (A/B execution).

Goal:

Execute the same task using two different agent strategies and compare their outputs, cost, speed, and failure modes.

I want to stress that this was my only prompt. Claude Code took it from there and set up the whole experiment itself, including the two opposing strategy prompts.

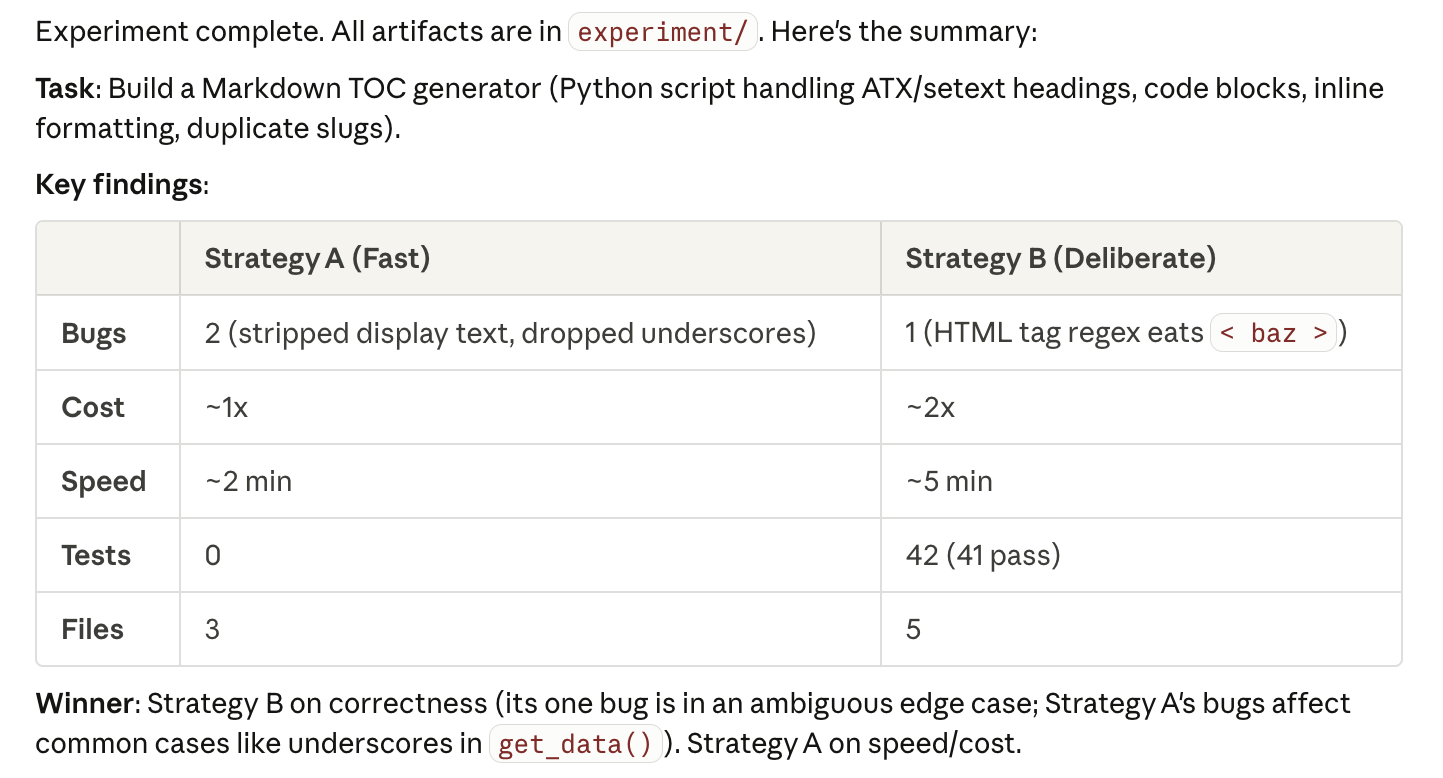

Interestingly, Claude picked the task itself: a Markdown TOC (table of contents) generator. It is small enough to finish in one run but tricky enough to have real edge cases. Claude then defined both strategies and launched two separate agents.

After the experiment had run I got the results back from Claude Code. First it explained the strategy it followed:

Strategy B won on correctness, Strategy A on speed and cost. But what’s the actual difference between the two runs?

| | Strategy A | Strategy B |
|---|---|---|
| Time | 60s | 5+ min |
| Tool calls | 9 | 24 |
| Plan? | No | Yes |
| Tests? | No | 27 |
| Bugs found | 0 | 1 (underscore) |
| Bugs introduced | 0 | 1 (HTML tags) |

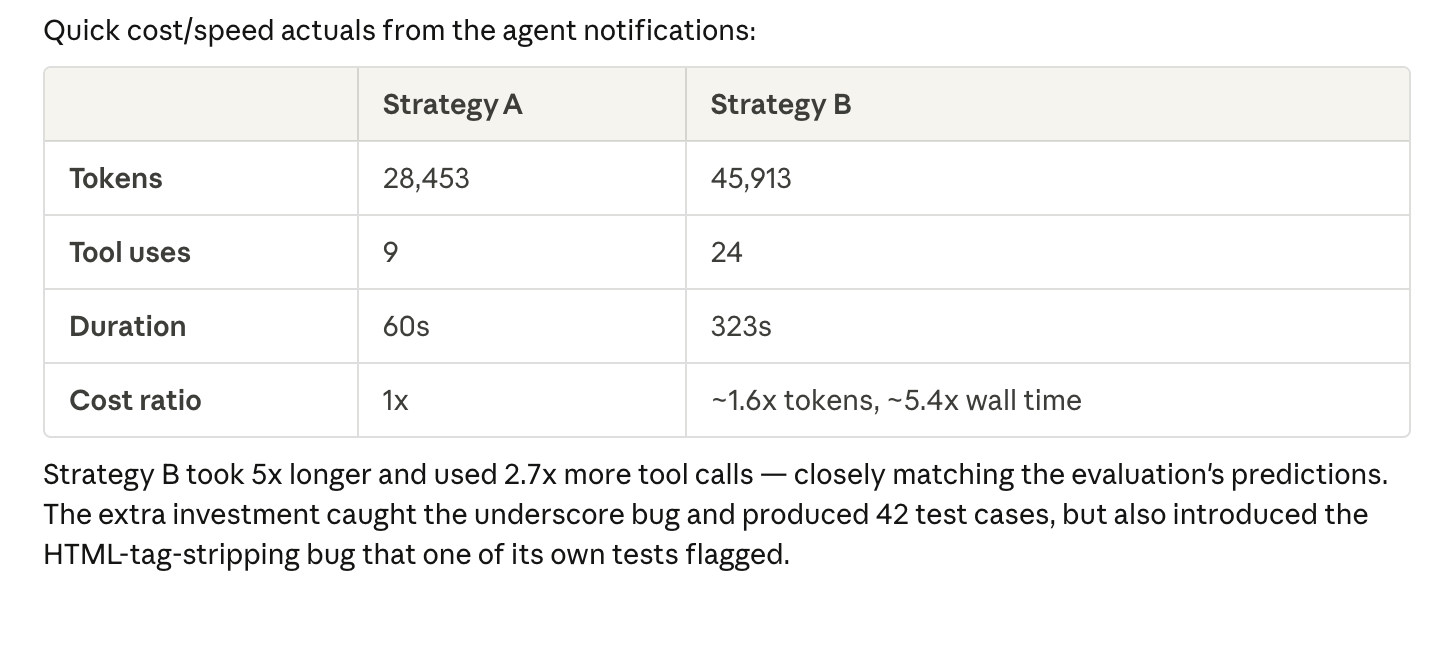

A big difference between the two is that Strategy B found an underscore-related bug during its own review. However, it also introduced a new bug (HTML tag stripping), as you can see in the results. More processing doesn’t mean fewer errors. It can just mean different errors.
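To make those two edge cases concrete, here is a minimal sketch of a TOC generator. It is not the code either agent wrote (the post does not show it). The anchor rules are an assumption modeled on GitHub-style slugs, and the names `slugify` and `generate_toc` are made up for illustration.

```python
import re

# ATX headings only ("# Title" through "###### Title"); Setext headings are ignored.
HEADING_RE = re.compile(r"^(#{1,6})\s+(.+?)\s*$")
HTML_TAG_RE = re.compile(r"</?[A-Za-z][^>]*>")
# Opening or closing code fence (three backticks or three tildes).
FENCE_RE = re.compile(r"^\s*(`{3}|~{3})")


def slugify(title: str) -> str:
    """Assumed GitHub-style anchor: lowercase, drop punctuation,
    keep word characters (underscores included) and hyphens, spaces -> hyphens."""
    slug = title.strip().lower()
    slug = re.sub(r"[^\w\- ]", "", slug)
    return slug.replace(" ", "-")


def generate_toc(markdown: str) -> str:
    entries = []
    seen = {}  # slug -> number of times it has appeared so far
    in_fence = False
    for line in markdown.splitlines():
        if FENCE_RE.match(line):
            in_fence = not in_fence
            continue
        if in_fence:
            continue  # a "# comment" inside a code block is not a heading
        match = HEADING_RE.match(line)
        if not match:
            continue
        level = len(match.group(1))
        # Assumption: inline HTML tags are removed, their text content is kept.
        title = HTML_TAG_RE.sub("", match.group(2)).strip()
        slug = slugify(title)
        count = seen.get(slug, 0)
        seen[slug] = count + 1
        if count:
            slug = f"{slug}-{count}"  # duplicate headings get a numeric suffix
        entries.append(f"{'  ' * (level - 1)}- [{title}](#{slug})")
    return "\n".join(entries)
```

A heading like `## my_function` has to keep its underscore in the anchor, while `## Using <code>foo</code> tags` should lose only the tags, not the text between them. Getting one of these right while breaking the other is easy, which is roughly the trade the results table shows.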

So Strategy B took 5x the time and 1.6x the tokens. The result is measurably better, but definitely not 5x better.
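The multipliers can be checked against the table with a few lines of Python. This sketch assumes "5+ min" means at least 300 seconds, so the time ratio is a lower bound. The token ratio is not recomputed because the raw token counts are not in the table.

```python
# Figures copied from the results table; "5+ min" is treated as a 300 s lower bound.
results = {
    "A": {"time_s": 60, "tool_calls": 9},
    "B": {"time_s": 300, "tool_calls": 24},
}


def ratio(metric: str) -> float:
    """How many times larger Strategy B's value is than Strategy A's."""
    return results["B"][metric] / results["A"][metric]


for metric in ("time_s", "tool_calls"):
    print(f"{metric}: B is {ratio(metric):.1f}x A")
# time_s: B is 5.0x A
# tool_calls: B is 2.7x A
```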

In the end, the right strategy depends on what you need from the result. For a prototype or internal tool, Strategy A wins. For production code that has to handle edge cases, the additional cost of Strategy B makes complete sense.

For me, the real surprise wasn’t which strategy won. It was that you can shape the agent’s approach just by describing what you care about. Is it speed or correctness? The agent adapts accordingly.
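One way to make that choice explicit is to prepend a short priority statement to every task. The sketch below is only an illustration: the wording of the hints and the `build_prompt` helper are hypothetical, not the prompts Claude Code generated in this experiment.

```python
# Hypothetical priority statements, loosely mirroring Strategy A and Strategy B.
PRIORITY_HINTS = {
    "speed": (
        "Priority: speed. Produce a working draft quickly; "
        "skip the upfront plan and the tests."
    ),
    "correctness": (
        "Priority: correctness. Plan first, write tests, and review "
        "your own output for edge cases before finishing."
    ),
}


def build_prompt(task: str, priority: str) -> str:
    """Prefix the task with a one-line statement of what matters most."""
    if priority not in PRIORITY_HINTS:
        raise ValueError(f"unknown priority: {priority!r}")
    return f"{PRIORITY_HINTS[priority]}\n\nTask: {task}"


print(build_prompt("Write a Markdown TOC generator.", "speed"))
```

The same task string with `"correctness"` instead of `"speed"` would nudge the agent toward the slower plan-and-test approach.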

Key Insight

The next time I prompt an agent, I’ll spend the extra 10 seconds describing whether I want a quick draft or a production-ready result. As I saw here, it can make a big difference.